by Dr. Ed Nuhfer, California State Universities (retired)

What if you could do an assessment that simultaneously revealed the student content mastery and intellectual development of your entire institution, and you could do so without either taking class time or costing your institution money? This blog offers a way to do this.

We know that metacognitive skills are tied directly to successful learning, yet metacognition is rarely taught in content courses, even though it is fairly easy to do. Self-assessment is neither the whole of metacognition nor of self-efficacy, but it is an essential component of both. Direct measures of students’ self-assessment skills are very good proxy measures for metacognitive skill and intellectual development. A school developing measurable self-assessment skill is likely to be developing self-efficacy and metacognition in its students.

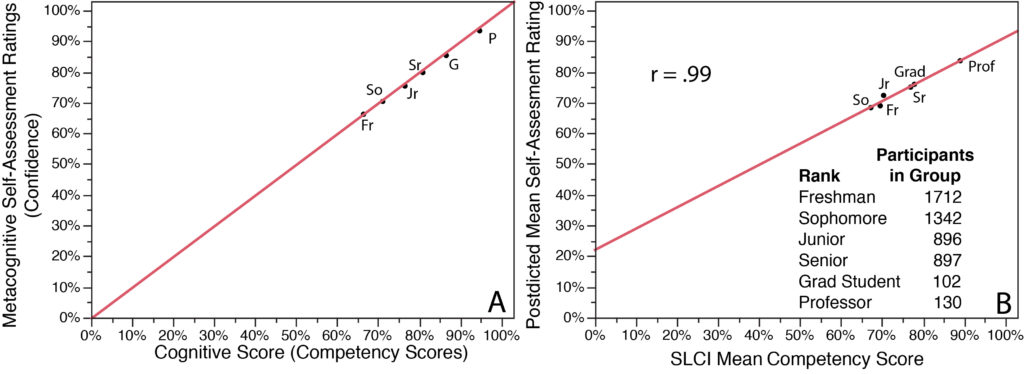

This installment comes with lots of artwork, so enjoy the cartoons! We start with Figure 1A, which is only a drawing, not a portrayal of actual data. It depicts an “Ideal” pattern for a university educational experience in which students progress up the academic ranks and grow in content knowledge and skills (abscissa) and in metacognitive ability to self-assess (ordinate). In Figure 1B, we now employ actual paired measures. Postdicted self-assessment ratings are estimated scores that each participant provides immediately after seeing and taking a test in its entirety.

Figure 1. Academic ranks’ (freshman through professor) mean self-assessed ratings of competence (ordinate) versus actual mean scores of competence from the Science Literacy Concept Inventory or SLCI (abscissa). Figure 1A is merely a drawing that depicts the Ideal pattern. Figure 1B registers actual data from many schools collected nationally. The line slopes less steeply than in Fig. 1A and the correlation is r = .99.

The result reveals that reality differs somewhat from the ideal in Figure 1A. The actual lower division undergraduates’ scores (Fig. 1B) do not order on the line in the expected sequence of increasing ranks. Instead, their scores are mixed among those of junior rank. We see a clear jump up in Figure 1B from this cluster to senior ranks, a small jump to graduate student rank and the expected major jump to the rank of professors. Note that Figure 1B displays means of groups, not ratings and scores of individual participants. We sorted over 5000 participants by academic rank to yield the six paired-measures for the ranks in Figure 1B.

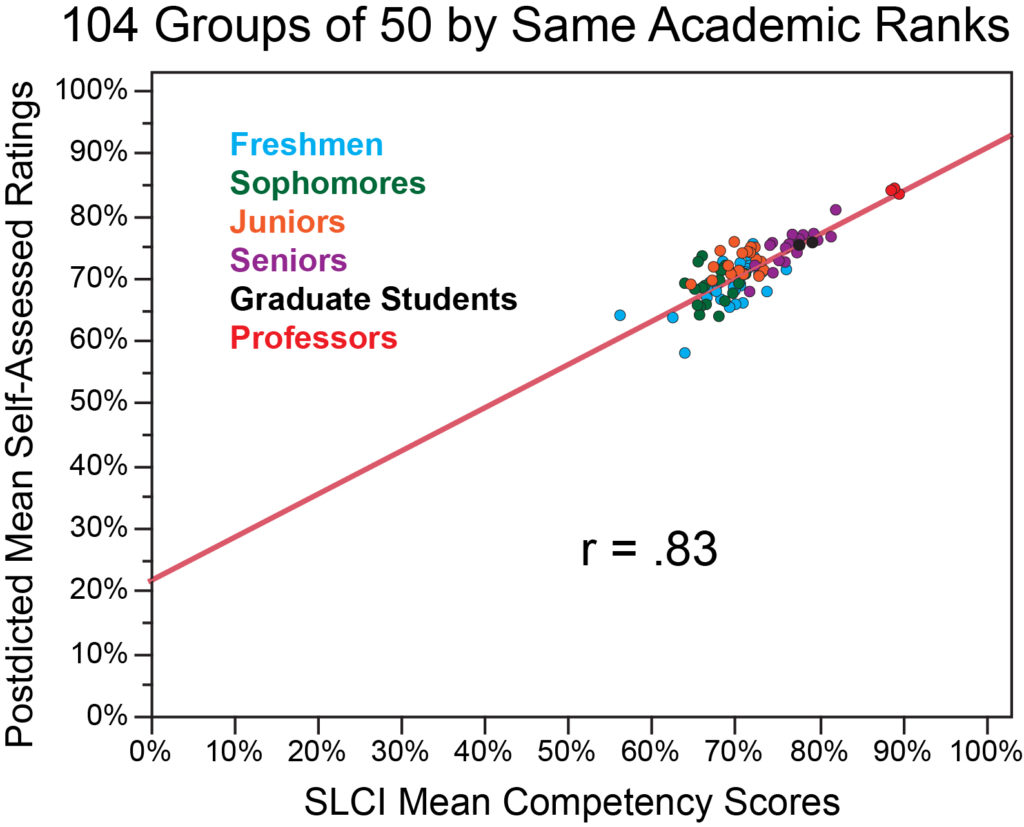

We underscore our appreciation for large databases and the power of aggregating confidence-competence paired data into groups. Employment of groups attenuates noise in such data, as we described earlier (Nuhfer et al. 2016), and enables us to perceive clearly the relationship between self-assessed competence and demonstrable competence. Figure 2 employs a database of over 5000 participants but depicts them as 104 groups of 50, each drawn at random across all institutions from within a single academic rank. The figure confirms the general pattern shown in Figure 1 by showing a general upward trend from novices (freshmen and sophomores) through developing experts (juniors, seniors and graduate students) to experts (professors), but with considerable overlap between novices and developing experts.

Figure 2. Mean postdicted self-assessment ratings (ordinate) versus mean science literacy competency scores by academic rank. Figure 2 comes from selecting random groups of 50 from within each academic rank and plotting paired-measures of 104 groups.
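The grouping step behind a graph like Figure 2 can be sketched in a few lines of Python. This is only a minimal sketch and not the analysis code used for this research: the record layout (rank, self-assessed rating, test score), the function name `group_means`, and the choice to drop leftover participants who do not fill a complete group are all assumptions made for illustration.

```python
import random
from statistics import mean

def group_means(records, group_size=50, seed=0):
    """Form random groups within each academic rank and average each group.

    records: iterable of (rank, self_assessed_rating, test_score) tuples
             (a hypothetical layout, assumed for this sketch).
    Returns a list of (rank, mean_self_assessed, mean_score), one per group.
    """
    rng = random.Random(seed)

    # Collect the paired measures separately for each rank.
    by_rank = {}
    for rank, rating, score in records:
        by_rank.setdefault(rank, []).append((rating, score))

    groups = []
    for rank, pairs in by_rank.items():
        shuffled = pairs[:]
        rng.shuffle(shuffled)
        # Slice the shuffled list into complete groups; leftovers are dropped.
        for start in range(0, len(shuffled) - group_size + 1, group_size):
            chunk = shuffled[start:start + group_size]
            groups.append((
                rank,
                mean(rating for rating, _ in chunk),
                mean(score for _, score in chunk),
            ))
    return groups
```

Each returned tuple corresponds to one plotted point: the mean test score of the group would go on the abscissa and the mean self-assessed rating on the ordinate.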

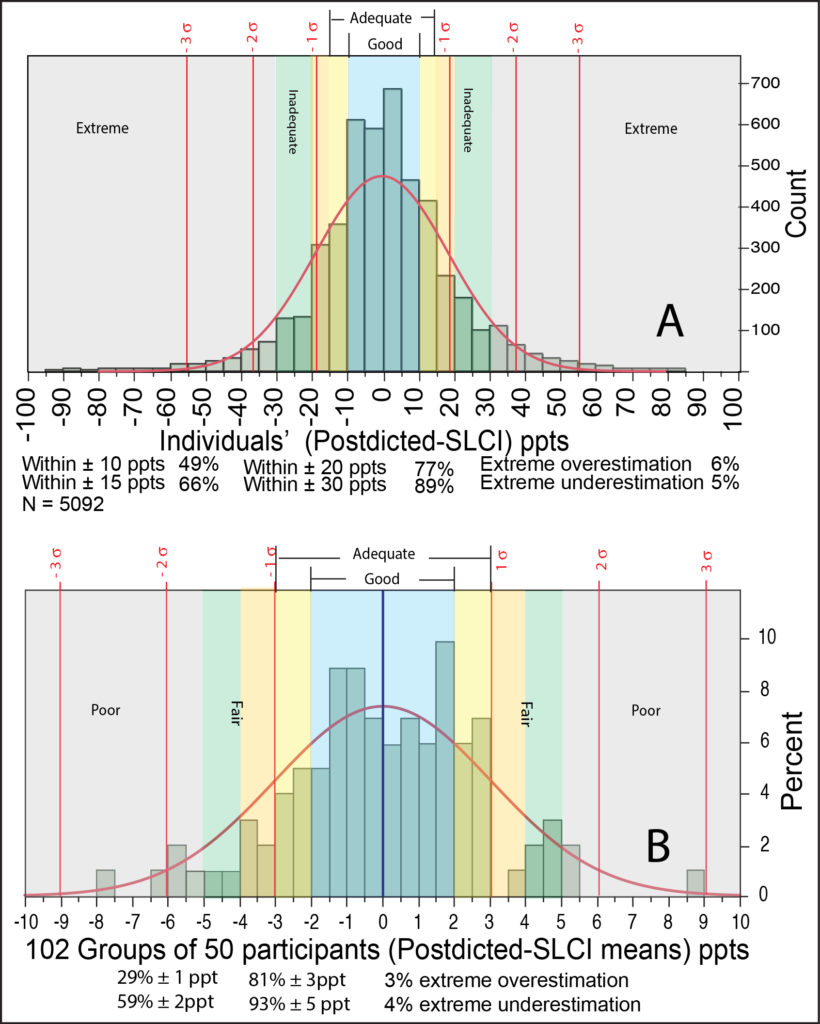

The correlation of r = .99 seen in Figure 1B has come down a bit to r = .83 in Figure 2. We can understand why this occurs by examining Figure 3 and Table 1. Figure 3 comes from our 2019 database of paired measures, which is now about four times larger than the database used in our earlier papers (Nuhfer et al. 2016, 2017). The results we reported earlier in this same kind of graph continue to be replicated here in Figure 3A. People generally appear good at self-assessment, and the figure refutes claims that most people are either “unskilled and unaware of it” or “…are typically overly optimistic when evaluating the quality of their performance….” (Ehrlinger, Johnson, Banner, Dunning, & Kruger, 2008).

Figure 3. Distributions of self-assessment accuracy for individuals (Fig. 3A) and of collective self-assessment accuracy of groups of 50 (Fig. 3B).

Note that the range of the abscissa has gone from 200 percentage points (ppts) in Fig. 3A to only 20 ppts in Fig. 3B. Of the groups of fifty, 81% estimate their mean scores within 3 ppts of their actual mean scores. While individuals are generally good at self-assessment, the collective self-assessment means of groups are even more accurate. Thus, the collective averages of classes on detailed course-based knowledge surveys seem to be valid assessments of the mean learning competence achieved by a class.

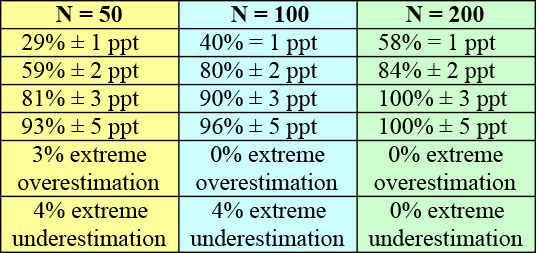

The larger the groups employed, the more accurately the mean group self-assessment rating is likely to approximate the mean competence test score of the group (Table 1). In Table 1, reading across the three columns from left to right reveals that, as group sizes increase, greater percentages of each group converge on the actual mean competency score of the group.

Table 1. Groups’ self-assessment accuracy by group size. The ratings in ppts of groups’ postdicted self-assessed mean confidence ratings closely approximate the groups’ actual demonstrated competency mean scores (SLCI). In group sizes of 200 participants, the mean self-assessment accuracy for every group is within ±3 ppts. To achieve such results, researchers must use aligned instruments that produce reliable data as described in Nuhfer, 2015 and Nuhfer et al. 2016.

From Table 1 and Figure 3, we can now understand how the very high correlations in Figure 1B are achievable by using sufficiently large numbers of participants in each group. Figure 3A and 3B and Table 1 employ the same database.
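The reasoning here, that averaging over more participants cancels more of the individual noise, can be illustrated with a small simulation. The sketch below uses no data from this study. It assumes that each person's self-assessment error (rating minus actual score) is an independent draw from a normal distribution centered on zero, and the 15 ppt spread, the function name `fraction_within`, and the number of simulated groups are arbitrary choices made only to show the trend.

```python
import random

def fraction_within(group_size, n_groups=2000, error_sd=15.0,
                    tolerance=3.0, seed=1):
    """Fraction of simulated groups whose mean error is within the tolerance.

    Individual errors are simulated as normal(0, error_sd) in ppts.
    The value of error_sd is an illustrative assumption, not a measurement.
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_groups):
        # Mean self-assessment error of one simulated group.
        group_error = sum(rng.gauss(0.0, error_sd)
                          for _ in range(group_size)) / group_size
        if abs(group_error) <= tolerance:
            hits += 1
    return hits / n_groups

if __name__ == "__main__":
    for size in (10, 50, 200):
        print(size, fraction_within(size))
```

Under these assumptions the spread of the group mean error shrinks roughly with the square root of the group size, so the fraction of groups landing within ±3 ppts rises as groups get larger. Real data need not follow a normal distribution, so the simulated fractions should not be read as a reproduction of Table 1.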

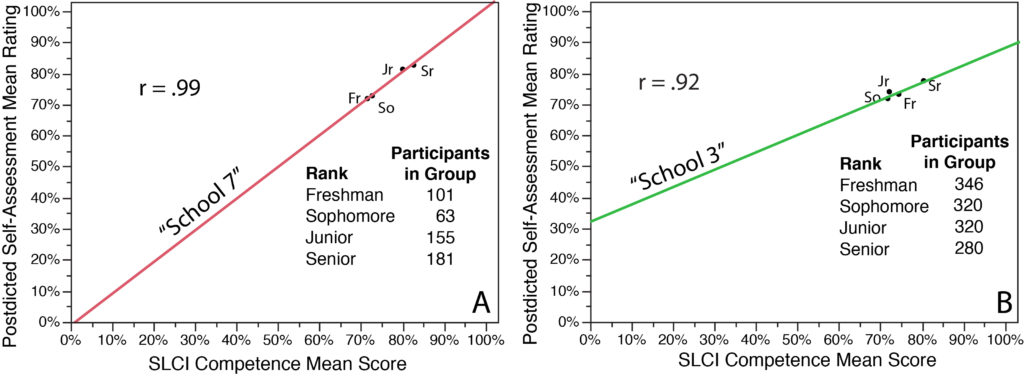

Finally, we verified that we could achieve high correlations like those in Figure 1B in single institutions, even when we examined only the four undergraduate ranks within each. We also confirmed that the rank orderings and best-fit line slopes formed patterns that differed measurably by institution. Two examples appear in Figure 4. The ordering of the undergraduate ranks and the slope of the best-fit line in graphs such as those in Fig. 4 are surprisingly informative.

Figure 4. Institutional profiles from paired measures of undergraduate ranks. Figure 4A is from a primarily undergraduate, public institution. Figure 4B comes from a public research-intensive university. The correlations remain very high, and the best-fit line slopes and the ordering pattern of undergraduate ranks are distinctly different between the two schools.

In general, steeply sloping best-fit lines in graphs like Figures 1B, 2, and 4A indicate that significant metacognitive growth is occurring together with the development of content expertise. In contrast, nearly horizontal best-fit lines (these do exist in our research results but are not shown here) indicate that students in such institutions are gaining content knowledge through their college experience but are not gaining metacognitive skill. We can use such information to guide the assessment stage of “closing the loop,” because it supports taking informed action. In all cases where undergraduate ranks appear ordered out of sequence in such assessments (as in Fig. 1B and Fig. 4B), we should seek to understand why.

In Figure 4A, “School 7” appears to be doing quite well. The steeply sloping line shows clear growth between lower division and upper division undergraduates in both content competence and metacognitive ability. The school might still want to explore how it could extend the gains of its sophomore and senior classes. “School 3” (Fig. 4B) would probably want to steepen its best-fit line by focusing first on increasing self-assessment skill development across the undergraduate curriculum.
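For readers who want to build a similar institutional profile from their own paired measures, the two quantities discussed above (the best-fit slope and whether the ranks fall in the expected order) can be computed as in the following sketch. It is a plain least-squares fit written for illustration, not the authors' procedure, and the function names `fit_line` and `ranks_in_sequence` and the dictionary layout are assumptions.

```python
from math import sqrt

def fit_line(scores, ratings):
    """Least-squares line of mean self-assessed rating on mean test score.

    scores:  mean competence scores per rank (abscissa)
    ratings: mean self-assessed ratings per rank (ordinate)
    Returns (slope, intercept, pearson_r).
    """
    n = len(scores)
    mean_x = sum(scores) / n
    mean_y = sum(ratings) / n
    sxx = sum((x - mean_x) ** 2 for x in scores)
    syy = sum((y - mean_y) ** 2 for y in ratings)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(scores, ratings))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r = sxy / sqrt(sxx * syy)
    return slope, intercept, r

def ranks_in_sequence(rank_means, expected_order):
    """True if mean test scores never decrease along the expected rank order.

    rank_means: dict mapping rank name -> (mean_score, mean_rating)
                (a hypothetical layout, assumed for this sketch).
    """
    scores = [rank_means[rank][0] for rank in expected_order]
    return all(a <= b for a, b in zip(scores, scores[1:]))
```

A slope near zero would correspond to the nearly horizontal lines described above, and a `False` result from `ranks_in_sequence` flags the out-of-sequence ordering that calls for a closer look.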

We recently used paired measures of competence and confidence to understand the effects of privilege on varied ethnic, gender, and sexual orientation groups within higher education. That work is scheduled for publication by Numeracy in July 2019. We are next developing a peer-reviewed journal article to use the paired self-assessment measures on groups to understand institutions’ educational impacts on students. This blog entry offers a preview of that ongoing work.

Notes. This blog follows on from earlier posts: Measuring Metacognitive Self-Assessment – Can it Help us Assess Higher-Order Thinking? and Collateral Metacognitive Damage, both by Dr. Ed Nuhfer.

The research reported in this blog distills a poster and oral presentation created by Dr. Edward Nuhfer, CSU Channel Islands & Humboldt State University (retired); Dr. Steven Fleisher, California State University Channel Islands; Rachel Watson, University of Wyoming; Kali Nicholas Moon, University of Wyoming; Dr. Karl Wirth, Macalester College; Dr. Christopher Cogan, Memorial University; Dr. Paul Walter, St. Edward’s University; Dr. Ami Wangeline, Laramie County Community College; Dr. Eric Gaze, Bowdoin College, and Dr. Rick Zechman, Humboldt State University. Nuhfer and Fleisher presented these on February 26, 2019 at the American Association of Behavioral and Social Sciences Annual Meeting in Las Vegas, Nevada. The poster and slides from the oral presentation are linked in this blog entry.